“Making a conservative case for alignment” by Cameron Berg, Judd Rosenblatt, phgubbins, AE Studio

Content provided by LessWrong. All podcast content, including episodes, graphics, and podcast descriptions, is uploaded and provided directly by LessWrong or their podcast platform partner. If you believe someone is using your copyrighted work without your permission, you can follow the process outlined here: https://th.player.fm/legal

Trump and the Republican party will wield broad governmental control during what will almost certainly be a critical period for AGI development. In this post, we want to briefly share various frames and ideas we’ve been thinking through and actively pitching to Republican lawmakers over the past months in preparation for this possibility.

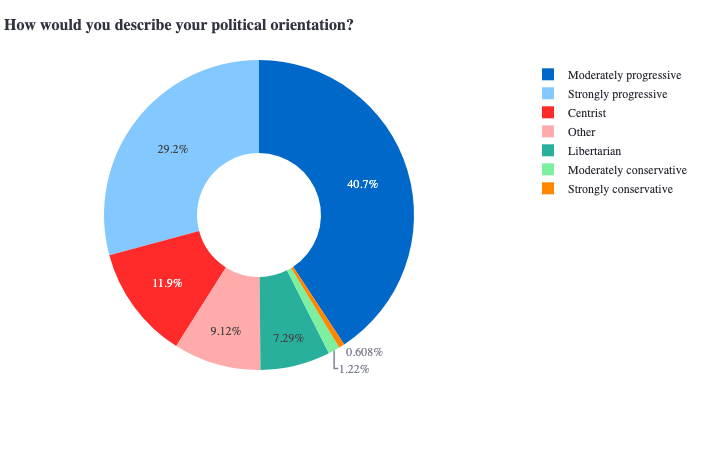

Why are we sharing this here? Given that >98% of the EAs and alignment researchers we surveyed earlier this year identified as everything-other-than-conservative, we consider thinking through these questions to be another strategically worthwhile neglected direction.

(Along these lines, we also want to proactively emphasize that politics is the mind-killer, and that, regardless of one's ideological convictions, those who earnestly care about alignment must take seriously the possibility that Trump will be the US president who presides over the emergence of AGI—and update accordingly in light of this possibility.)

Political orientation: combined sample of (non-alignment) [...]

---

Outline:

(01:20) AI-not-disempowering-humanity is conservative in the most fundamental sense

(03:36) We've been laying the groundwork for alignment policy in a Republican-controlled government

(08:06) Trump and some of his closest allies have signaled that they are genuinely concerned about AI risk

(09:11) Avoiding an AI-induced catastrophe is obviously not a partisan goal

(10:48) Winning the AI race with China requires leading on both capabilities and safety

(13:22) Concluding thought

The original text contained 4 footnotes which were omitted from this narration.

The original text contained 1 image which was described by AI.

---

First published:

November 15th, 2024

Source:

https://www.lesswrong.com/posts/rfCEWuid7fXxz4Hpa/making-a-conservative-case-for-alignment

---

Narrated by TYPE III AUDIO.

---

Images from the article:

Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.
